Original Article: https://itbrandpulse.com/industry-first-the-arrival-of-energy-oriented-mlops-and-the-convergence-of-power-and-compute-orchestration/

For decades, data center operations have existed in silos. Compute schedulers focused on performance and utilization. Storage systems optimized for throughput and latency. Networks were tuned for bandwidth and reliability. Power, meanwhile, remained largely external to orchestration logic: it was treated as a fixed constraint rather than a variable in day-to-day optimization. That separation made sense when workloads were predictable and energy was cheap. It breaks down in the AI era.

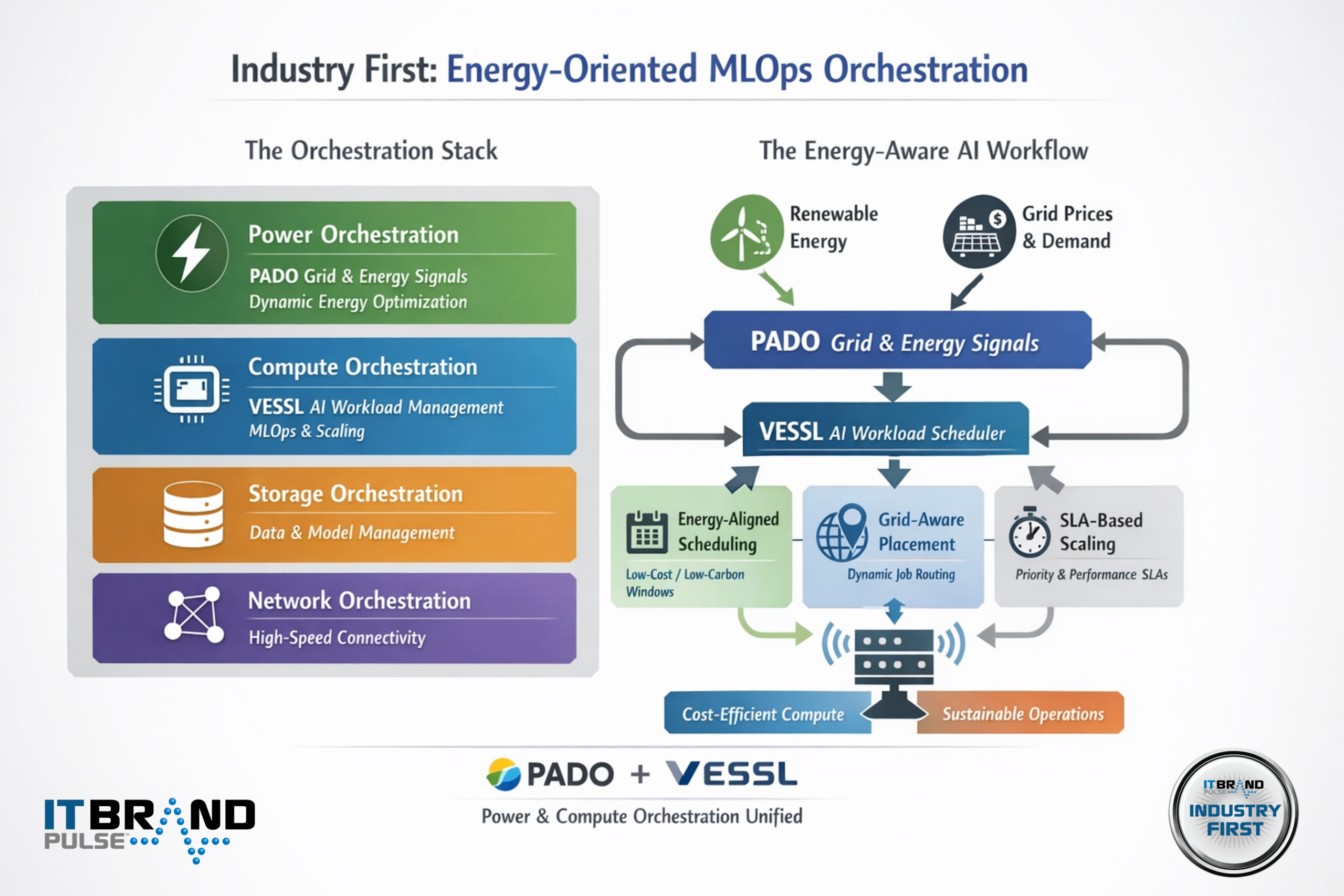

The partnership between PADO and VESSL marks an industry first: the introduction of a true energy-oriented MLOps workflow, where AI workloads are orchestrated not only around compute availability and performance, but also around real-time energy conditions, grid constraints, and sustainability objectives. This is not an incremental improvement; it is the first meaningful convergence of power orchestration and AI workload orchestration into a single operational model.

To understand why this matters, it’s useful to first define the major orchestration arenas that underpin modern data centers.

The Orchestration Stack: Power, Compute, Storage, and Networking

Power Orchestration: From Static Constraint to Dynamic Control Plane

Power orchestration has historically lived outside the IT stack, managed through facilities systems and utility relationships. PADO changes that model by treating energy as a software-addressable resource. Its platform ingests real-time grid signals, energy prices, carbon intensity, renewable availability, and demand-response events, and uses AI to optimize for objectives such as cost and carbon emissions.

Rather than simply reporting on power usage, power orchestration actively shapes demand. It enables data centers to delay, advance, or shift energy-intensive workloads based on grid conditions, participate in energy markets, and reduce exposure to peak pricing. In the AI era, where training runs and inference bursts can swing megawatts in minutes, this capability becomes foundational.
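The idea of delaying or advancing a deferrable workload based on grid conditions can be illustrated with a minimal sketch. Nothing here reflects PADO's actual platform or API: the `HourlySignal` type, the weights, and the forecast values are all hypothetical, and the sketch assumes an hourly forecast of price and carbon intensity is already available. It picks the contiguous window with the lowest weighted cost.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class HourlySignal:
    """One forecast hour (hypothetical fields and units, for illustration only)."""
    hour: int                # hour offset from now
    price_per_mwh: float     # energy price, $/MWh
    carbon_g_per_kwh: float  # grid carbon intensity, gCO2/kWh


def window_score(window: Sequence[HourlySignal],
                 price_weight: float, carbon_weight: float) -> float:
    """Weighted sum of price and carbon over a window; lower is better."""
    return sum(price_weight * s.price_per_mwh + carbon_weight * s.carbon_g_per_kwh
               for s in window)


def best_start_hour(signals: Sequence[HourlySignal], duration_hours: int,
                    price_weight: float = 1.0, carbon_weight: float = 0.0) -> int:
    """Return the start hour of the cheapest contiguous window of the given length."""
    if not 0 < duration_hours <= len(signals):
        raise ValueError("duration must fit inside the forecast horizon")
    starts = range(len(signals) - duration_hours + 1)
    best = min(starts, key=lambda i: window_score(
        signals[i:i + duration_hours], price_weight, carbon_weight))
    return signals[best].hour


# Made-up six-hour forecast: cheap power mid-horizon, clean power at the edges.
prices = [90, 80, 40, 30, 35, 100]
carbon = [100, 120, 300, 320, 310, 90]
forecast = [HourlySignal(h, p, c) for h, (p, c) in enumerate(zip(prices, carbon))]

print(best_start_hour(forecast, 2))                                        # price only
print(best_start_hour(forecast, 2, price_weight=0.0, carbon_weight=1.0))  # carbon only
```

With this made-up forecast, the price-only objective and the carbon-only objective select different windows, which is why the choice of objective matters as much as the signal itself.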

Compute Orchestration: The Brain of AI Infrastructure

Compute orchestration governs how workloads are scheduled, scaled, and executed across CPUs, GPUs, and accelerators. VESSL operates squarely in this domain, providing an MLOps platform that manages the full lifecycle of AI workloads (experimentation, training, deployment, and monitoring) across hybrid and multi-cloud environments.

VESSL abstracts infrastructure complexity so that AI teams can focus on models rather than machines. It optimizes for performance, reproducibility, and resource efficiency, ensuring that expensive GPU capacity is allocated according to priority and policy. Until now, however, compute orchestration has been largely energy-agnostic.

Storage Orchestration: Feeding the AI Pipeline

Storage orchestration ensures that massive datasets and model artifacts are available where and when they’re needed. In AI workflows, storage performance directly affects training time and inference latency. While storage orchestration is not the primary focus of the PADO–VESSL announcement, it remains a critical layer that must eventually align with energy-aware scheduling—especially as data movement itself becomes a significant energy cost.

Network Orchestration: The Invisible Multiplier

High-performance networking enables distributed training, multi-node inference, and cross-region workload mobility. As workloads shift in response to energy signals, network orchestration becomes an enabling force, ensuring that data and compute can move without bottlenecks. Energy-aware orchestration implicitly increases the importance of intelligent networking.

The Industry’s First Energy-Oriented MLOps Workflow

What makes the PADO–VESSL collaboration an industry first is not simply integration; it is intent. For the first time, energy becomes a first-class input into MLOps decision-making. In this workflow, PADO continuously evaluates grid and energy market conditions. VESSL consumes those signals and incorporates them into its scheduling logic. The result is an automated system where AI workloads can be:

- Scheduled during low-cost or low-carbon energy windows

- Shifted geographically based on grid conditions

- Balanced against SLA requirements and model urgency

- Scaled up or down in coordination with energy availability

This is not manual optimization. It is an AI-driven feedback loop where compute demand dynamically follows energy opportunity.
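One way to picture how energy opportunity can be balanced against SLA requirements is the sketch below. It is an assumption-laden illustration, not the PADO–VESSL scheduling logic: the function name, the single price threshold, the one-hour granularity, and the premise that the job can be paused and resumed at hour boundaries are all hypothetical. The job runs when power is cheap, and runs regardless of price once there is no slack left before its deadline.

```python
from typing import List, Sequence


def plan_run_hours(job_hours: int, deadline_hour: int,
                   prices: Sequence[float], price_threshold: float) -> List[int]:
    """Return the hours in which a pausable job runs.

    Hypothetical policy: run when the price is at or below the threshold,
    and always run once waiting any longer would miss the deadline.
    """
    remaining = job_hours
    run_hours: List[int] = []
    for hour, price in enumerate(prices):
        if remaining == 0:
            break
        # Hours we can still afford to idle before the deadline becomes unreachable.
        slack = deadline_hour - hour - remaining
        if slack <= 0 or price <= price_threshold:
            run_hours.append(hour)
            remaining -= 1
    return run_hours


# Made-up hourly prices ($/MWh); the job needs 3 hours and must finish by hour 6.
prices = [80, 30, 90, 95, 20, 100]
print(plan_run_hours(job_hours=3, deadline_hour=6, prices=prices, price_threshold=50))
```

In this example the job skips the expensive first hour, takes the cheap second hour, and is then forced onto the final two hours by its deadline, even though the last hour is the most expensive. A production scheduler would presumably use forecasts rather than a fixed threshold, but the precedence of the SLA over the energy signal is the point of the sketch.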

Key Features

Energy-Aligned Scheduling – Training and inference jobs are automatically aligned with favorable energy conditions.

Grid-Aware Placement – Workloads can be routed across clusters or regions based on power constraints and pricing.

SLA-Conscious Optimization – Business and research priorities remain intact while energy efficiency improves.

Economic Participation – Data centers gain the ability to monetize flexibility through demand response and grid services.
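The grid-aware placement feature listed above can be sketched as a filter-then-rank step. This is a hedged illustration only: the `Region` fields, the region names, and the numbers are invented, and the real system may weigh many more factors (data locality, network cost, carbon). The sketch first discards regions that lack GPU capacity or power headroom, then picks the cheapest of what remains.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Region:
    """Snapshot of one cluster or region (hypothetical fields)."""
    name: str
    price_per_mwh: float      # current energy price, $/MWh
    power_headroom_kw: float  # power available before hitting a grid or site limit
    free_gpus: int


def place_job(job_gpus: int, job_power_kw: float,
              regions: Sequence[Region]) -> Optional[str]:
    """Return the cheapest region that satisfies GPU and power constraints, or None."""
    feasible = [r for r in regions
                if r.free_gpus >= job_gpus and r.power_headroom_kw >= job_power_kw]
    if not feasible:
        return None  # caller may queue the job or relax constraints
    return min(feasible, key=lambda r: r.price_per_mwh).name


# Made-up regions: "A" is cheapest but power-constrained.
regions = [
    Region("A", price_per_mwh=40, power_headroom_kw=50, free_gpus=16),
    Region("B", price_per_mwh=60, power_headroom_kw=500, free_gpus=8),
    Region("C", price_per_mwh=55, power_headroom_kw=300, free_gpus=8),
]
print(place_job(job_gpus=8, job_power_kw=200, regions=regions))
```

Treating power headroom as a hard constraint and price as the ranking criterion is one design choice among several; a weighted score across price, carbon, and data-transfer cost would be an equally plausible alternative.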

Benefits

The benefits are immediate and compounding. Operators reduce operating costs and carbon exposure. AI teams gain access to larger effective compute budgets without increasing infrastructure spend. Utilities benefit from more predictable, flexible loads. Most importantly, AI infrastructure becomes scalable without being reckless in its energy consumption.

Market Impact: A New Category of Orchestration

This industry first signals the emergence of a new category: energy-aware data center orchestration. Over time, this approach will reshape buying criteria for AI infrastructure platforms. Performance alone will no longer be sufficient; orchestration systems will be judged by how intelligently they manage energy as well as compute.

We should expect rapid adoption among hyperscalers, AI service providers, and enterprises operating private GPU clusters, especially as energy costs, grid constraints, and sustainability mandates intensify.

Looking Ahead: 30 Years of Data Center Orchestration

Next 5–10 years: Energy-oriented MLOps becomes standard for large-scale AI deployments.

10–20 years: Data centers operate as flexible grid assets, coordinating compute with renewable generation.

20–30 years: Global AI workloads are orchestrated across continents based on real-time energy availability, carbon intensity, and climate conditions.

The PADO–VESSL workflow represents the first step into that future. By unifying power and compute orchestration, it defines a new operational baseline for AI infrastructure—one where intelligence applies not just to models, but to the energy that powers them.

AI Industry Firsts Validated by IT Brand Pulse

AI Industry Firsts spotlight the breakthroughs themselves: the moments when companies deliver genuine firsts that reset expectations, create new categories, or change how markets operate. Together, AI Brand Leaders (voted by humans) and validated AI Industry Firsts tell the full story of leadership in the AI era: who is leading and what is actually moving the industry forward. We invite readers to explore both perspectives to gain a complete view of how innovation and brand leadership intersect.